In today's data-driven world, building machine learning (ML) models is no longer the hardest part of delivering AI solutions — operationalizing those models at scale is. This is where MLOps (Machine Learning Operations) comes into play.

Inspired by DevOps practices, MLOps focuses on the deployment, monitoring, management, and governance of machine learning models in production environments. It ensures that ML systems are not just impressive in research labs, but also reliable, scalable, and valuable in the real world.

What is MLOps?

At its core, MLOps is a set of practices that combines Machine Learning, DevOps, and Data Engineering to:

- Accelerate the development and deployment of ML models

- Ensure consistent and reliable model performance

- Automate workflows around model lifecycle management

- Enable continuous integration, continuous delivery, and continuous training (retraining) of models (CI/CD/CT)

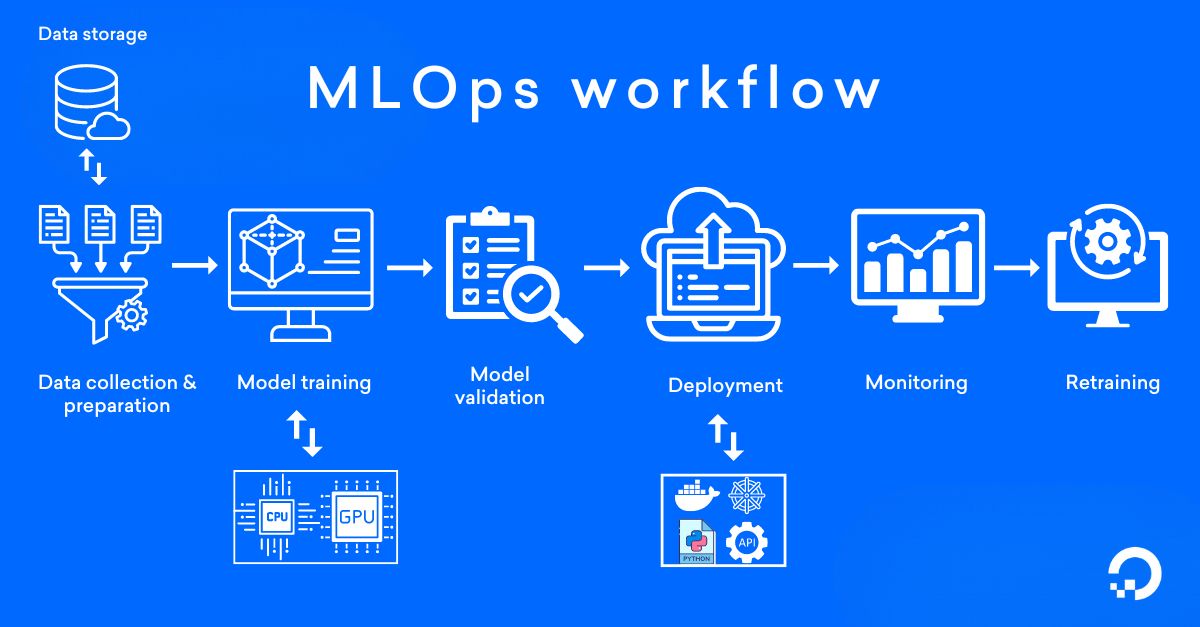

MLOps is not a single tool or framework. Instead, it’s a cultural and technological approach to managing the entire machine learning lifecycle — from data preparation and model training to deployment, monitoring, and retraining.

Why MLOps Matters

Most organizations quickly realize that building a machine learning model is just the beginning. Without robust MLOps processes, they face challenges like:

- Model decay: Deployed models lose accuracy over time as data patterns change.

- Deployment bottlenecks: Moving a model from a data scientist’s notebook to production can take weeks or months.

- Lack of reproducibility: Without proper tracking of data, code, and parameters, it's hard to understand how a model was built or to retrain it.

- Operational risks: Models can fail silently in production, producing bad predictions that go unnoticed until they hurt the business.

MLOps addresses these issues by enforcing automation, monitoring, governance, and collaboration across teams.

Key Components of MLOps

MLOps involves several important building blocks:

1. Model Versioning

Keeping track of different model versions, associated data, code, and hyperparameters to ensure reproducibility and easy rollback when necessary.
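As a rough illustration of the idea, the sketch below derives a version ID by hashing the training data, the code commit, and the hyperparameters together, so changing any one of them yields a new version. A toy in-memory registry keeps the history so the previous version can be restored. The `ModelVersion`/`Registry` names, the commit ID, and the sample data are all hypothetical; in practice, tools such as DVC or MLflow handle this bookkeeping.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class ModelVersion:
    """Metadata recorded for one trained model (hypothetical schema)."""
    version_id: str
    data_hash: str
    code_commit: str
    hyperparams: dict


def fingerprint(data_bytes: bytes, code_commit: str, hyperparams: dict) -> ModelVersion:
    """Derive a version id from the training data, code commit, and hyperparameters."""
    # Any change to the training data changes this hash.
    data_hash = hashlib.sha256(data_bytes).hexdigest()
    # sort_keys=True makes the serialization independent of dict insertion order.
    payload = json.dumps(
        {"data": data_hash, "code": code_commit, "params": hyperparams},
        sort_keys=True,
    )
    version_id = hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]
    return ModelVersion(version_id, data_hash, code_commit, hyperparams)


@dataclass
class Registry:
    """Toy in-memory registry; the newest entry is the one 'in production'."""
    history: list = field(default_factory=list)

    def register(self, mv: ModelVersion) -> None:
        self.history.append(mv)

    def current(self) -> ModelVersion:
        return self.history[-1]

    def rollback(self) -> ModelVersion:
        # Discard the newest version and fall back to the one before it.
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.current()


# Example: the commit id and data are made up for illustration.
data = b"age,income\n34,52000\n"
reg = Registry()
v1 = fingerprint(data, "a1b2c3d", {"lr": 0.01, "depth": 6})
v2 = fingerprint(data, "a1b2c3d", {"lr": 0.05, "depth": 6})  # only lr changed
reg.register(v1)
reg.register(v2)
restored = reg.rollback()  # production is back on v1
```

Because the ID is computed from content rather than assigned by hand, retraining with identical inputs reproduces the same ID. That makes it easy to tell whether two models were really built the same way.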

2. Automated ML Pipelines

Creating end-to-end pipelines that automate:

- Data ingestion and cleaning

- Feature engineering

- Model training

- Model evaluation

- Deployment to production
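The stages above can be sketched as plain functions chained into one pipeline. This is a deliberately tiny, hypothetical example: the raw records are invented, the "model" is a one-variable least-squares fit, and "deployment" is just a threshold check. An orchestrator such as Airflow, Kubeflow Pipelines, or Dagster would run each stage as a separate, retryable task.

```python
from statistics import mean


def ingest():
    # Hypothetical raw records; a real pipeline would read from a data source.
    return [
        {"x": "1", "y": "2.1"}, {"x": "2", "y": "3.9"}, {"x": None, "y": "5.0"},
        {"x": "3", "y": "6.2"}, {"x": "4", "y": "7.8"},
    ]


def clean(rows):
    # Drop rows with missing values and cast strings to floats.
    return [
        (float(r["x"]), float(r["y"]))
        for r in rows
        if r["x"] is not None and r["y"] is not None
    ]


def engineer_features(pairs):
    # Placeholder: real pipelines would scale, encode, or derive features here.
    return pairs


def train(pairs):
    # Ordinary least squares for y = a*x + b.
    xs, ys = zip(*pairs)
    mx, my = mean(xs), mean(ys)
    a = sum((x - mx) * (y - my) for x, y in pairs) / sum((x - mx) ** 2 for x in xs)
    return a, my - a * mx


def evaluate(model, pairs):
    # Mean absolute error. For brevity this reuses the training data;
    # a real pipeline would evaluate on a held-out set.
    a, b = model
    return mean(abs(a * x + b - y) for x, y in pairs)


def deploy(model, mae, threshold=0.5):
    # Stand-in for deployment: "ship" only if the error is acceptable.
    return mae <= threshold


def run_pipeline():
    pairs = engineer_features(clean(ingest()))
    model = train(pairs)
    mae = evaluate(model, pairs)
    return model, mae, deploy(model, mae)


model, mae, shipped = run_pipeline()
```

Keeping each stage as its own function makes stages individually testable and lets the orchestrator rerun only the step that failed.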

3. Continuous Integration and Delivery (CI/CD) for ML

Every change in code, data, or model is automatically tested, validated, and deployed with minimal manual intervention — similar to how software engineers manage applications.
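One ML-specific piece of CI/CD is a promotion gate. Besides running unit tests, the pipeline checks that a newly trained model is at least as good as the one in production before deploying it. The sketch below is a hypothetical gate. The `EvalReport` fields, the accuracy metric, and the 100 ms latency budget are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EvalReport:
    """Offline evaluation results for one model (hypothetical fields)."""
    accuracy: float
    p95_latency_ms: float


def should_promote(
    candidate: EvalReport,
    production: EvalReport,
    min_gain: float = 0.0,
    max_latency_ms: float = 100.0,
) -> Tuple[bool, List[str]]:
    """Return (promote?, reasons it was blocked)."""
    reasons = []
    # Quality check: the candidate must match or beat production by min_gain.
    if candidate.accuracy < production.accuracy + min_gain:
        reasons.append(
            f"accuracy {candidate.accuracy:.3f} < required "
            f"{production.accuracy + min_gain:.3f}"
        )
    # Operational check: the candidate must stay within the latency budget.
    if candidate.p95_latency_ms > max_latency_ms:
        reasons.append(
            f"p95 latency {candidate.p95_latency_ms:.1f} ms > {max_latency_ms:.1f} ms"
        )
    return (len(reasons) == 0, reasons)


# Example: a CI job would fail the build (non-zero exit) when ok is False.
ok, reasons = should_promote(EvalReport(0.91, 80.0), EvalReport(0.89, 75.0))
```

Returning the list of reasons, not just a boolean, means the CI log explains why a model was blocked. This saves a round trip to the data scientist.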

4. Monitoring and Observability

Setting up real-time monitoring for:

- Model performance (e.g., accuracy, drift)

- Data quality

- Infrastructure health

This ensures issues are detected early and corrected proactively.
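Drift monitoring often compares the distribution of a feature in production against its distribution at training time. One common statistic for this is the Population Stability Index, PSI = Σ (a_i − e_i) · ln(a_i / e_i), where e_i and a_i are the baseline and current fractions of values in bin i. The sketch below is a minimal pure-Python version. It assumes equal-width bins taken from the baseline, and the 0.25 alert threshold is only a commonly cited rule of thumb. Libraries such as Evidently AI or WhyLabs offer more complete drift tests.

```python
import math
from bisect import bisect_right


def _bin_proportions(values, edges):
    """Fraction of values falling in each bin defined by sorted interior edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(baseline, current, n_bins=10, eps=1e-6):
    """Population Stability Index of `current` relative to `baseline`."""
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        raise ValueError("baseline has no spread; cannot build bins")
    width = (hi - lo) / n_bins
    # Equal-width interior edges from the baseline; out-of-range values
    # fall into the first or last bin.
    edges = [lo + width * i for i in range(1, n_bins)]
    expected = _bin_proportions(baseline, edges)
    actual = _bin_proportions(current, edges)
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # guard against log(0) for empty bins
        total += (a - e) * math.log(a / e)
    return total


# Example with synthetic data: training-time feature values vs. today's.
baseline = [i / 100 for i in range(100)]       # spread over [0, 0.99]
shifted = [0.5 + i / 200 for i in range(100)]  # concentrated in the upper half
score = psi(baseline, shifted)
alert = score > 0.25  # commonly cited rule-of-thumb threshold
```

In a real deployment, a score like this would be computed on a schedule for each feature and exported to a dashboard such as Grafana, with an alert attached to the threshold.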

5. Model Governance and Compliance

Maintaining audit trails for model decisions, ensuring explainability, fairness, and compliance with regulations like GDPR, HIPAA, and others.
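At its simplest, an audit trail records, for every prediction, which model version produced it, from which inputs, and (optionally) why. The sketch below writes one JSON Lines entry per decision. The field names and the choice to store a hash of the inputs instead of raw values are assumptions made for illustration. A production audit log would also need tamper-evident, access-controlled storage, which this sketch does not provide.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Any, Dict, Optional


def audit_record(
    model_version: str,
    features: Dict[str, Any],
    prediction: Any,
    explanation: Optional[Dict[str, float]] = None,
) -> str:
    """Serialize one prediction as a JSON Lines audit entry."""
    # Hash the inputs (keys sorted) so the entry can be matched to its inputs
    # later without copying raw feature values into the log.
    input_hash = hashlib.sha256(
        json.dumps(features, sort_keys=True).encode("utf-8")
    ).hexdigest()
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": input_hash,
        "prediction": prediction,
        # Optional per-feature attributions, e.g. from an explainability tool.
        "explanation": explanation or {},
    }
    return json.dumps(record, sort_keys=True)


def append_audit(path: str, line: str) -> None:
    """Append one entry; mode 'a' never overwrites earlier entries."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(line + "\n")


# Example: the version id and features are hypothetical.
entry = audit_record(
    "3f9a1c2b7d40",
    {"age": 34, "income": 52000},
    "approve",
    {"income": 0.6, "age": 0.1},
)
```

Linking each entry to a model version ID (such as one produced by the versioning step) is what lets an auditor reconstruct exactly which model made a given decision.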

MLOps Tools and Technologies

Some popular tools that power MLOps include:

- Version Control: Git, DVC (Data Version Control)

- CI/CD Pipelines: Jenkins, GitLab CI, Azure DevOps

- Model Training and Experiment Tracking: MLflow, Weights & Biases, TensorBoard

- Deployment and Serving: Kubernetes, TensorFlow Serving, TorchServe, Seldon

- Monitoring: Prometheus, Grafana, WhyLabs, Evidently AI

- Workflow Orchestration: Apache Airflow, Kubeflow Pipelines, Dagster

Choosing the right combination depends on your organization’s size, tech stack, and goals.

Challenges in Adopting MLOps

While the promise of MLOps is clear, implementing it isn’t always straightforward. Organizations often struggle with:

- Organizational silos between data scientists, engineers, and IT teams

- Lack of standardization in ML workflows

- Managing large, fast-changing datasets

- Balancing experimentation freedom with operational discipline

Building a strong MLOps culture requires cross-functional collaboration, investment in tooling, and a shift toward automation-first thinking.

The Future of MLOps

As machine learning adoption continues to grow, MLOps will evolve further to:

- Support real-time inference at scale

- Enable multi-cloud and hybrid deployments

- Provide deeper explainability and bias detection tools

- Integrate with generative AI (GenAI) and foundation model platforms

- Move toward low-code/no-code ML pipelines for faster experimentation

Ultimately, MLOps is becoming a critical enabler for companies looking to turn their ML investments into real business value.

Conclusion

MLOps is no longer a "nice-to-have" — it's a must-have for organizations serious about deploying machine learning into production.

By combining the best practices of software engineering, data engineering, and machine learning, MLOps ensures that ML projects move from isolated experiments to production-ready, continuously improving systems that deliver real-world impact.

If you're looking to make your ML models faster, safer, and smarter, investing in MLOps is the way forward.
